Asymptotic equivalent analysis of the LMS algorithm under linearly filtered processes

EURASIP Journal on Advances in Signal Processing volume 2016, Article number: 18 (2016)

Abstract

While the least mean square (LMS) algorithm has been widely explored for some specific statistics of the driving process, an understanding of its behavior under general statistics has not been fully achieved. In this paper, the mean square convergence of the LMS algorithm is investigated for the large class of linearly filtered random driving processes. In particular, the paper contains the following contributions: (i) The parameter error vector covariance matrix can be decomposed into two parts, a first part that exists in the modal space of the driving process of the LMS filter and a second part, existing in its orthogonal complement space, which does not contribute to the performance measures (misadjustment, mismatch) of the algorithm. (ii) The impact of additive noise is shown to contribute only to the modal space of the driving process independently from the noise statistic and thus defines the steady state of the filter. (iii) While the previous results have been derived with some approximation, an exact solution for very long filters is presented based on a matrix equivalence property, resulting in a new conservative stability bound that is more relaxed than previous ones. (iv) In particular, it will be shown that the joint fourth-order moment of the decorrelated driving process is a more relevant parameter for the step-size bound rather than, as is often believed, the second-order moment. (v) We furthermore introduce a new correction factor accounting for the influence of the filter length as well as the driving process statistic, making our approach quite suitable even for short filters. (vi) All statements are validated by Monte Carlo simulations, demonstrating the strength of this novel approach to independently assess the influence of filter length, as well as correlation and probability density function of the driving process.

1 Introduction

The well-known least mean square (LMS) algorithm [1] is the most successful of all adaptive algorithms. In its normalized version (NLMS), it can be found by the million in electrical echo compensators [2], in telephone switches, and also in the form of adaptive equalizers [3]. No other adaptive algorithm has been so successfully placed in commercial products.\(^{1}\) With a fixed step-size \(\mu\), starting at the initial value \(\mathbf{w}_{0}\), the LMS algorithm is given by

Here, a reference model \(d_{k}=\mathbf{w}^{T}\mathbf{u}_{k}+v_{k}\) has been introduced, as is common for a system identification problem, assuming that an optimal solution \(\mathbf{w}\in \mathbb{R}^{M\times 1}\) exists. It is further assumed that the observed system output is additively disturbed by real-valued, zero-mean noise \(v_{k}\in \mathbb{R}\) of variance \({\sigma _{v}^{2}}\). The regression vector is \(\mathbf{u}_{k}\in \mathbb{R}^{M\times 1}\), with M denoting the order of the filter. Starting from the initial value \(\mathbf{w}_{0}\), the algorithm tries to improve its estimate \(\mathbf{w}_{k}\in \mathbb{R}^{M\times 1}\) at every time instant k. All signals are formulated as real-valued, which makes the derivations easier to follow. In most cases, it is straightforward to extend the results to complex-valued processes; where difficulties arise, the results for the complex-valued case will be pointed out.
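As a point of orientation, the fixed step-size LMS recursion for this reference model can be sketched in a few lines. The sketch is a minimal illustration and not the paper's own formulation: it assumes the common update \(\mathbf{w}_{k+1}=\mathbf{w}_{k}+\mu \mathbf{u}_{k}e_{k}\) with a priori error \(e_{k}=d_{k}-\mathbf{w}_{k}^{T}\mathbf{u}_{k}\), a white Gaussian driving process, and a zero initial value. The function name `lms_identify` and all parameter values are hypothetical.

```python
import numpy as np

def lms_identify(w_true, mu, num_steps, noise_std=0.01, seed=0):
    """Fixed-step LMS on the reference model d_k = w^T u_k + v_k (assumed index convention)."""
    rng = np.random.default_rng(seed)
    w_true = np.asarray(w_true, dtype=float)
    M = len(w_true)
    w_hat = np.zeros(M)                                  # initial value w_0, assumed zero
    u_scalar = rng.standard_normal(num_steps + M - 1)    # white driving process
    sq_err = np.empty(num_steps)
    for k in range(num_steps):
        # transversal regression vector, newest sample first
        u_vec = u_scalar[k:k + M][::-1]
        d = w_true @ u_vec + noise_std * rng.standard_normal()
        e = d - w_hat @ u_vec                            # a priori error
        w_hat = w_hat + mu * u_vec * e                   # LMS update
        sq_err[k] = np.sum((w_true - w_hat) ** 2)        # squared parameter error
    return w_hat, sq_err

w_true = np.array([0.5, -0.3, 0.2, 0.1])                 # hypothetical system to identify
w_hat, sq_err = lms_identify(w_true, mu=0.05, num_steps=5000)
```

With a small step-size, the squared parameter error `sq_err` decays towards a noise-dependent floor; a larger step-size speeds up the initial decay at the cost of a higher floor.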

While deterministic approaches have proven \(l_{2}\) stability for any kind of driving signal \(\mathbf{u}_{k}\) [4–7], results from stochastic approaches are restricted to specific classes of random processes (unfiltered independent identically distributed (IID) [8], Gaussian [9, 10], and spherically invariant random processes (SIRP) [11]). A recent historical overview is provided in [12]. Nevertheless, such stochastic analysis is useful since it provides information about how the speed of convergence and the steady-state error depend on the step-size \(\mu\). The resulting stability bounds [8–11, 13] are typically conservative:

and are based on fourth-order moments of Gaussian variables, which in turn can be expressed by the second-order moments collected in the autocorrelation matrix \(\mathbf{R}_{\text{uu}}=E[\mathbf{u}_{k}\mathbf{u}_{k}^{T}]\). Furthermore, the derivation of this bound for stability in the mean square sense is based on the so-called independence assumption (IA), an assumption that will also be applied throughout this article.

In Section 2, it is demonstrated that an initial parameter error vector covariance matrix is forced by the LMS algorithm to remain a member of the modal space of \(\mathbf{R}_{\text{uu}}\). However, this rule is not true in a strict sense for arbitrary driving processes and requires some mild approximations to make it a more general statement.

In Section 3, our considerations are complemented by analyzing the steady-state behavior of the algorithm, and finally we link all these elements to a strong statement about a large class of linearly filtered random processes of the moving average type. This class naturally includes linearly filtered IID processes, but it is even possible to include some particular statistically dependent terms, meaning that SIRPs are also covered. A crucial parameter to describe dynamical as well as stability behavior turns out to be the joint fourth-order moment \(m_{\mathrm {x}}^{(2,2)}=E\left [{x_{k}^{2}}{x_{l}^{2}}\right ]\) for \(l\ne k\) of the corresponding decorrelated (white)\(^{2}\) driving process. In the case of very long filters, it turns out that even the mild approximations of the driving process are no longer required and thus less conservative step-size bounds are obtained. This is reported in Section 4. Finally, a validation of the theoretical statements is provided in Section 5 by Monte Carlo simulations. Some conclusions in Section 6 round off the paper.

Notation: To further facilitate the reading of the article, a list of commonly used variables and terms is provided in Table 1. The notation \(A[x_{k}]\) is used to describe a linear operator on a scalar input and \(A[\mathbf{x}_{k}]\) on a vector input. As the linear operator \(A[\cdot ]\) in our contribution is limited to a linear time-invariant filter, it can equivalently be described by a convolution \(A[x_{k}]=\sum _{m=0}^{P} a_{m}x_{k-m}\) with the coefficients \(a_{m}\) describing the impulse response of the filter. Consequently, \(A[\mathbf{x}_{k}]=\sum _{m=0}^{P} a_{m} \mathbf{x}_{k-m}\). Equivalently, such a convolution can be described by a linear transformation applying an upper right Toeplitz matrix \(\mathbf{A}\in \mathbb{R}^{M\times (M+P)}\) to an input vector \(\mathbf{x}_{k}\in \mathbb{R}^{(M+P)\times 1}\). In this case, the output vector \(\mathbf{u}_{k}=\mathbf{A}\mathbf{x}_{k}\) is of dimension \(M\times 1\). If the vector \(\mathbf{x}_{k}=[x_{k},x_{k-1},\ldots ,x_{k-M-P+1}]^{T}\) exhibits a shift property, so does the corresponding output \(\mathbf{u}_{k}=[u_{k},u_{k-1},\ldots ,u_{k-M+1}]^{T}\). Throughout the paper, \(x_{k}\) denotes a white process and \(u_{k}\) the corresponding filtered process. Furthermore, two other linear operators on square matrices will be used: (1) \(\Lambda =\text{diag}[\mathbf{L}]\) on a matrix \(\mathbf{L}\) results in a diagonal matrix \(\Lambda \) whose diagonal entries are identical to the diagonal of \(\mathbf{L}\) and all other entries are zero; (2) \(\text{tr}[\mathbf{L}]\) on a matrix \(\mathbf{L}\) results in the trace of the matrix, i.e., \(\text {tr}[\mathbf{L}]=\sum _{m=1}^{M} \mathbf{L}_{mm}\). The symbol \(\otimes \) denotes a tensor or Kronecker product.
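The equivalence between the convolution and the Toeplitz matrix description can be checked numerically. The following sketch builds the upper right Toeplitz matrix for a short, hypothetical impulse response and compares \(\mathbf{A}\mathbf{x}_{k}\) with the directly filtered samples; the helper name `filter_matrix` and the numerical values are assumptions made only for illustration.

```python
import numpy as np

def filter_matrix(a, M):
    """Upper right Toeplitz matrix A of size M x (M+P) with a_0 on the main diagonal."""
    a = np.asarray(a, dtype=float)
    P = len(a) - 1
    A = np.zeros((M, M + P))
    for row in range(M):
        A[row, row:row + P + 1] = a          # impulse response on every row
    return A

rng = np.random.default_rng(1)
a = np.array([1.0, 0.5, -0.25])              # hypothetical impulse response, P = 2
M = 4
P = len(a) - 1
x = rng.standard_normal(50)                  # white sequence x_0, ..., x_49
u = np.convolve(x, a)[:len(x)]               # u_k = sum_m a_m x_{k-m}

k = 20
x_vec = x[k - M - P + 1:k + 1][::-1]         # [x_k, x_{k-1}, ..., x_{k-M-P+1}]
u_vec = u[k - M + 1:k + 1][::-1]             # [u_k, u_{k-1}, ..., u_{k-M+1}]
A = filter_matrix(a, M)
matches = np.allclose(A @ x_vec, u_vec)      # matrix form agrees with the convolution
```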

2 Modal space of the LMS algorithm

Let us consider the classical LMS analysis [8, 10], utilizing the IA as stated in the introduction:

Independence assumption (IA): The regression vector \(\mathbf{u}_{k}\) is statistically independent of the past regression vectors, i.e., \(\{\mathbf{u}_{k-1},\mathbf{u}_{k-2},\ldots ,\mathbf{u}_{0}\}\).

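This assumption was introduced by Ungerboeck [8] and thoroughly investigated by Mazo [14] in the context of adaptive equalizers. A consequence of such an assumption is that the parameter estimate \(\mathbf{w}_{k}\) (as well as the parameter error vector \(\tilde {{\mathbf {w}}}_{k}={\mathbf {w}}-{\mathbf {w}}_{k}\)) is independent of \(\mathbf{u}_{k}\) and thus \(E[\mathbf{u}_{k}\mathbf{u}_{k}^{T}(\mathbf{w}-\mathbf{w}_{k})(\mathbf{w}-\mathbf{w}_{k})^{T}\mathbf{u}_{k}\mathbf{u}_{k}^{T}]=E[\mathbf{u}_{k}\mathbf{u}_{k}^{T}E[(\mathbf{w}-\mathbf{w}_{k})(\mathbf{w}-\mathbf{w}_{k})^{T}]\mathbf{u}_{k}\mathbf{u}_{k}^{T}]=E[\mathbf{u}_{k}\mathbf{u}_{k}^{T}\mathbf{K}_{k}\mathbf{u}_{k}\mathbf{u}_{k}^{T}]\), where the parameter error vector covariance matrix \(\mathbf{K}_{k}=E[(\mathbf{w}-\mathbf{w}_{k})(\mathbf{w}-\mathbf{w}_{k})^{T}]\)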

was applied.\(^{3}\) In the literature, \(\tilde {{\mathbf {w}}}_{k}\) is also often referred to as the weight error vector, \(\mathbf{w}_{k}\) as the weight estimate, and \(\mathbf{K}_{k}\) as the weight error vector covariance matrix. The IA holds exactly for the linear combiner case in which succeeding regression vectors are statistically independent of each other (see, for example, multiple sidelobe canceller (MSLC) applications [15]), while in practice the LMS algorithm is mostly run on transversal filters in which the regression vectors exhibit a shift dependency. Note that we will consider the shift structure of the regression vector, that is, \(\mathbf{u}_{k}=[u_{k},u_{k-1},\ldots ,u_{k-M+1}]^{T}\), only for its generation process, as it will be generated by linearly filtering random processes over time. The IA will nevertheless be required in the derivations for analyzing the algorithm, which does not contradict the generation process. The major advantage of the IA is that the evolution of the parameter error covariance matrix \(\mathbf{K}_{k}\) can be computed and thus the learning curves of the algorithm, i.e., its learning behavior, can be derived. This knowledge also provides the steady-state values and, furthermore, allows practical step-size bounds to be derived.

Note that some analyses in the past have attempted to overcome the independence assumption; good overviews of techniques can be found in [16, 17]. In [17, 18], the authors have assumed small step-sizes (much smaller than the largest possible step-size) so that the updates are mostly static compared to the rapid changes in the signals. This approach, however, basically leads to first-order results as the LMS algorithm then behaves similarly to the steepest descent algorithm. In [19, 20], Douglas et al. solved the problem by introducing a linearly filtered moving average (MA) process and formulating all terms in the evolution of the covariance matrix \(\mathbf{K}_{k}\) explicitly. While the outcomes obtained are precise, the method relies on symbolic computation (MAPLE) and results, even for small problems (filter order M<10), in a very large set of linear equations. The method only provides numerical terms that neither give much insight into the functionality of the algorithm nor provide general analytical statements. The approach in [21, 22] follows similar lines while imposing the IA; there, a large set of linear equations (of the order \(M^{2}\times M^{2}\)) has to be computed, due to terms of the form \(\mathbf{u}_{k}\mathbf{u}_{k}^{T} \otimes \mathbf{u}_{k}\mathbf{u}_{k}^{T}\), and then analyzed. Similar to the previous idea, this method also does not provide analytical insight and becomes tedious if not infeasible for correlated signals as well as large matrices. Finally, Butterweck [18, 23–25] has introduced a novel idea based on wave propagation. He showed that for very long filters (M→∞), the derivation does not require the classic IA. However, several other signal conditions are required (e.g., Gaussian driving process, small step-size, infinite filter order M). Our results in Section 4 will be compared to his results when stability conditions for very long filters are derived.

It should also be mentioned that the deterministic approaches [4, 5, 7] do not require the IA in order to derive step-size bounds either; however, as in the case of other contributions, they are unable to provide learning curves or steady-state values without imposing the IA. Experimental results have shown that utilizing the IA in FIR filter structures may lead to poor learning behavior estimates under strongly correlated processes but surprisingly accurate steady-state values of the parameter covariance matrix. The IA therefore is still frequently used in current analyses of gradient-type algorithms, see, for example, recent publications [3, 26, 27]. Note that our analysis is also based on an infinite filter length approach but, in contrast to others, we quantify the error term related to the filter length, which allows us to provide rather accurate results even for short filters (e.g., M=10).

In this paper, the classic stochastic approaches from Horowitz and Senne [9] and Feuer and Weinstein [10] are pursued and extended in numerous directions, relying on a general MA driving process similar to that proposed in [19, 20]. Symmetric matrices, such as the parameter error vector covariance matrix \(\mathbf{K}_{k}\), can be decomposed into components from two complementary subspaces (see [28] and Appendix 1 for details), namely

Here, \(P(\mathbf{R}_{\text{uu}})\) denotes a polynomial in \(\mathbf{R}_{\text{uu}}\) and \({\mathbf {K}}^{\perp }_{k}\) is an element of its orthogonal complement, i.e., everything in \(\mathbf{K}_{k}\) that cannot be approximated by \(P(\mathbf{R}_{\text{uu}})\). Note that the orthogonality of the complement space is only evident in the trace but not in the matrix product itself: in general \({\mathbf {{K}}}_{k}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\ne {\mathbf {{0}}}\) while \(\text{tr}\left [{\mathbf {{K}}}_{k}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\right ]=0\). It turns out that only members of the subspace \(P(\mathbf{R}_{\text{uu}})\) contribute to the error performance measures of the algorithm (see Eq. (42) for the mismatch, \(\text {tr}\left [{\mathbf {{K}}}_{k}{\mathbf {R}}_{\text {uu}}^{0}\right ]\); see (43) for the misadjustment, \(\text{tr}[\mathbf{K}_{k}\mathbf{R}_{\text{uu}}]\)), as only terms of the form \(\text {tr}\left [{\mathbf {{K}}}_{k}{\mathbf {R}}_{\text {uu}}^{l}\right ]\) are of interest, while the complementary part satisfies \(\text {tr}\left [{\mathbf {{K}}}_{k}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\right ]=0\) and thus does not contribute to the performance measures.
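The decomposition can be illustrated numerically. The sketch below is one possible construction and not necessarily the one used in Appendix 1: it assumes that \(\mathbf{R}_{\text{uu}}\) has distinct eigenvalues, so that the polynomials in \(\mathbf{R}_{\text{uu}}\) are exactly the matrices that are diagonal in its eigenbasis, and it projects a symmetric matrix onto this set under the trace inner product. The helper name `split_modal` and the randomly generated matrices are hypothetical.

```python
import numpy as np

def split_modal(K, R):
    """Split symmetric K into K_par (diagonal in the eigenbasis of R) and the remainder K_perp."""
    _, Q = np.linalg.eigh(R)                     # R = Q diag(lam) Q^T
    K_modal = Q.T @ K @ Q
    K_par = Q @ np.diag(np.diag(K_modal)) @ Q.T  # keep only the modal (diagonal) part
    return K_par, K - K_par

rng = np.random.default_rng(2)
M = 5
G = rng.standard_normal((M, 3 * M))
R = G @ G.T / (3 * M)                            # hypothetical autocorrelation matrix
w = rng.standard_normal(M)
K0 = np.outer(w, w)                              # a rank-one symmetric matrix to decompose
K_par, K_perp = split_modal(K0, R)

# the complement is invisible under the trace although K_perp R^l itself is nonzero
traces = [np.trace(K_perp @ np.linalg.matrix_power(R, l)) for l in range(4)]
commutator = K_par @ R - R @ K_par               # vanishes for a polynomial in R
product_norm = np.linalg.norm(K_perp @ R)
```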

In a first step, the following equation

is obtained. Let us start with an example to illustrate its behavior.

Example: A general \(\mathbf{K}_{0}\) will be a linear combination as shown in (5). Take, for example, a fixed system \(\mathbf{w}\) to be identified, with the initial value \(\mathbf{w}_{0}=\mathbf{0}\). In this case, \(\mathbf{K}_{0}=\mathbf{w}\mathbf{w}^{T}\). This value can be decomposed into \(P(\mathbf{R}_{\text{uu}})\) in the modal space of \(\mathbf{R}_{\text{uu}}\) and a component \(\mathbf{K}^{\perp }\) from its orthogonal complement. This also allows the description of the evolution of the individual components; starting with \({\mathbf {K}}_{k}={\mathbf {K}}_{k}^{\|}+{\mathbf {K}}_{k}^{\perp }\), with \({\mathbf {K}}_{k}^{\|}\in {\mathcal {R}}_{\mathrm {u}}\) and \({\mathbf {K}}_{k}^{\perp }\in {\mathcal {R}}_{\mathrm {u}}^{\perp }\), a set of equations is obtained

The parameter error covariance matrix \({\mathbf {K}}_{k}={\mathbf {K}}_{k}^{\|}+{\mathbf {K}}_{k}^{\perp }\) thus evolves into a new matrix \({\mathbf {K}}_{k+1}={\mathbf {K}}_{k+1}^{\|}+{\mathbf {K}}_{k+1}^{\perp }\) in which its part \({\mathbf {K}}_{k}^{\|}\) from \({\mathcal {R}}_{\mathrm {u}}\) stays in the modal space and appears as the new part \({\mathbf {K}}_{k+1}^{\|}\), contributing to the learning performance terms. Similarly, the perpendicular term \({\mathbf {K}}_{k}^{\perp }\) stays in the complement space as \({\mathbf {K}}_{k+1}^{\perp }\) and will thus not contribute to the algorithm’s performance curves under the trace operation. The noise contributes only to the modal space. This makes it possible to formulate a first statement for Gaussian driving processes.

Lemma 2.1.

Assume the driving process to be a correlated Gaussian process with autocorrelation matrix \(\mathbf{R}_{\text{uu}}\). Under the IA, the initial parameter error vector covariance matrix \(\mathbf{K}_{0}\) of the LMS algorithm evolves (1) into a polynomial in \(\mathbf{R}_{\text{uu}}\) of the modal space of \(\mathbf{R}_{\text{uu}}\), solely responsible for the mismatch and the misadjustment of the algorithm, and (2) a part in its orthogonal complement which has no impact on the performance measures.
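The statement of the lemma can be explored numerically. The sketch below assumes the classical IA-based covariance recursion for a Gaussian driving process, \(\mathbf{K}_{k+1}=\mathbf{K}_{k}-\mu (\mathbf{K}_{k}\mathbf{R}_{\text{uu}}+\mathbf{R}_{\text{uu}}\mathbf{K}_{k})+\mu ^{2}(2\mathbf{R}_{\text{uu}}\mathbf{K}_{k}\mathbf{R}_{\text{uu}}+\text{tr}[\mathbf{R}_{\text{uu}}\mathbf{K}_{k}]\mathbf{R}_{\text{uu}})+\mu ^{2}\sigma _{v}^{2}\mathbf{R}_{\text{uu}}\), which may differ in notation from the equations referred to in the text. It evolves the modal part and the complement part separately and checks that they stay in their subspaces. All names and numerical values are hypothetical.

```python
import numpy as np

def gaussian_step(K, R, mu, noise_var, excite=True):
    """One covariance update under the IA for a Gaussian driving process (assumed form)."""
    K_next = K - mu * (K @ R + R @ K) + 2 * mu**2 * R @ K @ R
    if excite:   # trace coupling and noise only act on the modal-space part
        K_next = K_next + mu**2 * (np.trace(R @ K) + noise_var) * R
    return K_next

rng = np.random.default_rng(3)
M = 4
G = rng.standard_normal((M, 4 * M))
R = G @ G.T / (4 * M)                            # hypothetical autocorrelation matrix
_, Q = np.linalg.eigh(R)
w = rng.standard_normal(M)
K = np.outer(w, w)                               # K_0 = w w^T
K_par = Q @ np.diag(np.diag(Q.T @ K @ Q)) @ Q.T  # modal part (distinct eigenvalues assumed)
K_perp = K - K_par                               # orthogonal complement part
mu, noise_var = 0.2 / np.trace(R), 1e-2

for _ in range(200):
    K = gaussian_step(K, R, mu, noise_var)
    K_par = gaussian_step(K_par, R, mu, noise_var)
    K_perp = gaussian_step(K_perp, R, mu, noise_var, excite=False)

split_error = np.linalg.norm(K - K_par - K_perp)
perp_traces = [np.trace(K_perp @ np.linalg.matrix_power(R, l)) for l in range(3)]
par_commutator = np.linalg.norm(K_par @ R - R @ K_par)
```

In this sketch, the full recursion and the sum of the two separately evolved parts coincide, which is the numerical counterpart of the claim that the complement never feeds the trace-based performance measures.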

Note that the correlated Gaussian case has been solved for a while [9, 10], typically resulting in a matrix evolution as presented in (7), see, e.g., [13, 29]. However, the term (8) has never been studied at all. Including uncorrelated IID or SIRP processes required modifications of the method [11, 30]. With the proposed method, we will be able to include arbitrary correlated processes within the same framework.

While the example presented above is somewhat intuitive for the particular case of a Gaussian driving process, it is of interest what can be said about larger classes of driving processes. To this end, a number of considerations with respect to the driving process are required.

Driving process: The properties of Lemma 2.1 are not only maintained by Gaussian random processes but also by a much larger class of driving processes. It will be shown that these properties hold for random processes that are constructed by a linearly filtered white zero-mean random process \(u_{k}=A[\!x_{k}]=\sum _{m=0}^{P} a_{m} x_{k-m}\), whose only conditions are that:

Driving process assumptions (A1a):

Note that the conditions are shown for real-valued processes; for complex-valued processes, they need to be adjusted. The last four conditions (12)–(15) are listed here for completeness. They exclude processes that do not have a zero mean in some sense and have been assumed, although not often explicitly mentioned, in most of the literature. Linearly filtering such processes will preserve the zero-mean properties (12)–(15). These processes certainly include not only real-valued Gaussian and SIRP processes \(\left (3m_{\mathrm {x}}^{(2,2)} =m_{\mathrm {x}}^{(4)}=3\right)\) and complex-valued Gaussian and SIRP processes \(\left (2m_{\mathrm {x}}^{(2,2)}=m_{\mathrm {x}}^{(4)}=2\right)\), but also IID processes \(\left (m_{\mathrm {x}}^{(2,2)}=\left (m_{\mathrm {x}}^{(2)}\right)^{2}\right)\). Once vectors \(\mathbf{x}_{k}=[x_{k},x_{k-1},\ldots ,x_{k-N+1}]^{T}\) have been constructed, the following second- and fourth-order expressions are found:

Correspondingly, the linearly filtered vectors read

with an upper right Toeplitz matrix \(\mathbf{A}\) of dimension \(M\times N=M\times (M+P)\). The impulse response of the coloring filter is given by \(a_{0},a_{1},\ldots ,a_{P}\) and appears in every row of \(\mathbf{A}\), starting with \(a_{0}\) on its main diagonal. In general, the driving process vector \(\mathbf{x}_{k}\) is longer than \(\mathbf{u}_{k}\), depending on the order P of the impulse response.

However, in order to guarantee independence of the vectors \(\mathbf{u}_{k},\mathbf{u}_{k-1},\ldots \), we have to impose a second condition on the driving process:

Driving process assumptions (A1b): Assume the driving process \(\mathbf{u}_{k}\) to be generated by a linearly filtered process \(\mathbf{x}_{k}\) as described above. We furthermore have to assume that at each time instant k, a new statistically independent process \(\left \{x_{l}^{(k)}\right \}\) is generated whose values are processed from \(l=k-N+1,\ldots ,k\) to generate \(\mathbf{u}_{k}\). For two different time instants \(k_{1}\) and \(k_{2}\), we assume that the corresponding processes are statistically independent, i.e.,

Note that Assumption A1b is sufficient to avoid the IA but not necessary. On the other hand, dropping A1b requires the IA to ensure that the results of the paper hold. To facilitate reading, we will drop the superscript in the notation, \(\mathbf{x}^{(k)}_{l}\to \mathbf{x}_{l}\). With such assumptions, we are now ready for our first general statement.

Lemma 2.2.

Assume the driving process \(u_{k}=A[x_{k}]\) to originate from a linearly filtered white random process \(x_{k}\) so that \(\mathbf{u}_{k}=\mathbf{A}\mathbf{x}_{k}\) with \(\mathbf{x}_{k}=[x_{k},x_{k-1},\ldots ,x_{k-N+1}]^{T}\), where \(\mathbf{A}\) denotes an upper right Toeplitz matrix with the correlation filter impulse response and \(x_{k}\) satisfies conditions (9)–(17). The initial parameter error vector covariance matrix \({\mathbf {K}}_{0}={\mathbf {K}}_{0}^{\|}+{\mathbf {K}}_{0}^{\perp }\) of the LMS algorithm essentially (with an error of order \({\mathcal {O}}(\mu ^{2})\)) evolves into a polynomial in \(\mathbf{A}\mathbf{A}^{T}\) in the modal space of \(\mathbf{R}_{\text{uu}}\) while terms in its orthogonal complement \(\mathbf{K}^{\perp }\) either remain there or die out.

Note that this formulation may suggest that the statement is only true for linearly filtered processes of the moving average (MA) type. However, as no condition on the order P of such a process is imposed, P (and thus N=M+P) can become arbitrarily large (e.g., P→∞), and thus autoregressive (AR) processes or combinations (ARMA) can be approximated as well (e.g., see [13] (chapter 2.7)).

Proof.

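The proof proceeds in two steps: first, (6) is rewritten for \(\mathbf{K}_{0}=\mathbf{I}\) in order to identify the most important terms and mathematical steps based on a simpler formulation; then, the arguments are refined for arbitrary updates from \(\mathbf{K}_{k}\) to \(\mathbf{K}_{k+1}\).

For \(\mathbf{K}_{0}=\mathbf{I}\) and recalling that \(\mathbf{R}_{\text{uu}}=E[\mathbf{u}_{k}\mathbf{u}_{k}^{T}]=\mathbf{A}E[\mathbf{x}_{k}\mathbf{x}_{k}^{T}]\mathbf{A}^{T}=\mathbf{A}\mathbf{A}^{T}\), the following is obtained: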

On the main diagonal of the \(M\times M\) matrix \(\mathbf{A}\mathbf{A}^{T}\), identical elements are found: \(\sum _{i=0}^{P} |a_{i}|^{2}\); thus, \(\text {tr}[\mathbf{A}^{T}\mathbf{A}]=\text {tr}[\mathbf{A}\mathbf{A}^{T}]=M\sum _{i=0}^{P} |a_{i}|^{2}\), with P denoting the filter order of the MA process.

Due to properties (9)–(17) of the driving process \(x_{k}\in \mathbb{R}\),

is found, where \(\text{diag}[\mathbf{L}]\) denotes a diagonal matrix with the diagonal terms of a matrix \(\mathbf{L}\) as entries. When \(x_{k}\in \mathbb{C}\), a slightly different result is obtained:

For spherically invariant random processes (including Gaussian), the term \(\left (m_{\mathrm {x}}^{(4)}-3m_{\mathrm {x}}^{(2,2)}\right)\) for real-valued signals, or \(\left (m_{\mathrm {x}}^{(4)}-2m_{\mathrm {x}}^{(2,2)}\right)\) for complex-valued signals, vanishes and thus the problem can be solved classically.
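A small exact computation can make the role of the extra diagonal term tangible. The sketch below assumes that the real-valued fourth-order identity has the form \(E[\mathbf{x}\mathbf{x}^{T}\mathbf{L}\mathbf{x}\mathbf{x}^{T}]=m_{\mathrm {x}}^{(2,2)}\left (2\mathbf{L}+\text{tr}[\mathbf{L}]\mathbf{I}\right)+\left (m_{\mathrm {x}}^{(4)}-3m_{\mathrm {x}}^{(2,2)}\right)\text{diag}[\mathbf{L}]\) for symmetric \(\mathbf{L}\), which is consistent with the remark that the last term vanishes for SIRPs, and verifies it by full enumeration for a hypothetical three-point IID distribution with unit variance.

```python
import itertools
import numpy as np

def fourth_order_expectation(L, values, probs):
    """Exact E[x x^T L x x^T] for an IID vector x with a discrete distribution."""
    N = L.shape[0]
    total = np.zeros((N, N))
    for idx in itertools.product(range(len(values)), repeat=N):
        x = np.array([values[i] for i in idx])
        p = np.prod([probs[i] for i in idx])
        total += p * (x @ L @ x) * np.outer(x, x)    # x x^T L x x^T = (x^T L x) x x^T
    return total

# hypothetical zero-mean, unit-variance, sub-Gaussian three-point distribution
values = np.array([-np.sqrt(2.0), 0.0, np.sqrt(2.0)])
probs = np.array([0.25, 0.5, 0.25])
m2 = np.sum(probs * values**2)
m4 = np.sum(probs * values**4)
m22 = m2**2                                          # IID: joint moment factorizes

rng = np.random.default_rng(4)
S = rng.standard_normal((3, 3))
L = S + S.T                                          # symmetric test matrix
exact = fourth_order_expectation(L, values, probs)
formula = (m22 * (2 * L + np.trace(L) * np.eye(3))
           + (m4 - 3 * m22) * np.diag(np.diag(L)))
```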

In our particular case, \(\mathbf{L}=\mathbf{A}^{T}\mathbf{A}\in \mathbb{R}^{(M+P)\times (M+P)}\) with \(\text {tr}[\mathbf{A}^{T}\mathbf{A}]=M \sum _{i=0}^{P} |a_{i}|^{2}\) and \(\text {diag}[\mathbf{A}\mathbf{A}^{T}]=\sum _{i=0}^{P} |a_{i}|^{2} \mathbf{I}_{M} = \frac 1{M}\text {tr}[\mathbf{A}^{T}\mathbf{A}]\mathbf{I}_{M}\). There still remains one problematic term, however: \(\text{diag}[\mathbf{A}^{T}\mathbf{A}]\). At this point, the following is proposed:

Asymptotic equivalence: \(\lim _{M\to \infty } \frac 1M \left \|\text {diag}[\mathbf{A}^{T}\mathbf{A}]-\frac {\text {tr}[\mathbf{A}^{T}\mathbf{A}]}{M} \mathbf{I}_{M+P}\right \|_{F}=0\) with an identity matrix \(\mathbf{I}_{M+P}\) of the corresponding dimension. Note that the equivalence would hold exactly even for small dimensions if it involved the term \(\text{diag}[\mathbf{A}\mathbf{A}^{T}]\) instead of \(\text{diag}[\mathbf{A}^{T}\mathbf{A}]\). The asymptotic equivalence can be interpreted as replacing each of the diagonal elements of \(\mathbf{A}^{T}\mathbf{A}\) by their average value \(\frac 1{M}\text {tr}[\mathbf{A}^{T}\mathbf{A}]\). Consider the relative difference matrix

of dimension \((M+P)\times (M+P)\). Its P diagonal terms at the beginning and at the end of the diagonal remain nonzero while the terms in the middle (whose range can be substantial if M>P) are zero. The first P elements on the diagonal are, for example, given by \({\mathbf {{\Delta }}}_{\epsilon,ii}=-\sum _{m=i}^{P} |a_{m}|^{2}/\sum _{m=0}^{P} |a_{m}|^{2};\ i=1,\ldots ,P\). If M≫P, the next elements are all zero and finally, the last P elements are the first in backward notation. In case P>M/2, the zero elements do not occur. We can conclude from the construction of the diagonal elements \(\mathbf{\Delta }_{\epsilon ,ii}\) that they are all in the interval (−1,0], or alternatively \(\|\mathbf{\Delta }_{\epsilon }\|_{\infty }\le 1\), where we have applied a max norm. Note that for white processes \(a_{0}=1\) and \(a_{i}=0;\ i=1,2,\ldots \), thus \(\|\mathbf{\Delta }_{\epsilon }\|_{\infty }=0\).
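The border structure of the relative difference matrix can be inspected numerically. The sketch below assumes that the relative difference is formed as \(\text{diag}[\mathbf{A}^{T}\mathbf{A}]\) divided by the average value \(\frac 1M\text{tr}[\mathbf{A}^{T}\mathbf{A}]\), minus the identity, which reproduces the expression quoted for the first P diagonal elements. The filter coefficients and the helper name `relative_difference_diag` are hypothetical.

```python
import numpy as np

def relative_difference_diag(a, M):
    """Diagonal of diag[A^T A] / (tr[A^T A] / M) - I for the upper right Toeplitz matrix A."""
    a = np.asarray(a, dtype=float)
    P = len(a) - 1
    A = np.zeros((M, M + P))
    for row in range(M):
        A[row, row:row + P + 1] = a
    AtA = A.T @ A
    mean_diag = np.trace(AtA) / M                # equals sum_i |a_i|^2
    return np.diag(AtA) / mean_diag - 1.0

a = np.array([1.0, 0.6, -0.3, 0.1])              # hypothetical coloring filter, P = 3
M, P = 12, 3
delta = relative_difference_diag(a, M)
energy = np.sum(a**2)
# quoted expression for the first P diagonal elements, i = 1, ..., P
predicted_head = np.array([-np.sum(a[i:]**2) / energy for i in range(1, P + 1)])
# normalized Frobenius norm of the (unscaled) difference for growing M
frob = [np.linalg.norm(relative_difference_diag(a, m) * energy) / m for m in (10, 100, 1000)]
```

In this example, only the P entries at each end of the diagonal are nonzero, so the normalized Frobenius norm in `frob` shrinks as M grows.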

It is worth comparing this with the long filter derivations by Butterweck [24], which exclude border effects at the beginning and end of the matrices. Our approximation can therefore be interpreted as following the same line of approximation, only originating from a different approach. We are, however, able to quantify the error term, which will prove helpful later. Note that in Section 4, this approximation will be dropped entirely for large values of M for different reasons. With this error term \(\mathbf{\Delta }_{\epsilon }\) for real-valued driving processes,

is obtained with a newly introduced pdf shape correction value

a value that depends on the statistics of the random process \(x_{k}\). The term \(\frac {m_{\mathrm {x}}^{(4)}}{m_{\mathrm {x}}^{(2,2)}}-3\) is similar to the excess kurtosis \(\frac {E\left [|x-m_{\mathrm {x}}|^{4}\right ]}{E\left [|x-m_{\mathrm {x}}|^{2}\right ]^{2}}-3=\frac {m_{\mathrm {x}}^{(4)}}{\left (m_{\mathrm {x}}^{(2)}\right)^{2}}-3\). Processes with negative excess kurtosis are often referred to as sub-Gaussian processes while a positive excess kurtosis leads to so-called super-Gaussian processes. This (slightly abused) terminology will be used correspondingly to classify the sign of the term \(\frac {m_{\mathrm {x}}^{(4)}}{m_{\mathrm {x}}^{(2,2)}}-3\). Thus, sub-Gaussian processes in this sense take on \(\gamma _{\mathrm {x}}\) values smaller than one while super-Gaussian processes have values larger than one. However, it is also noted that our approximation error \(\mathbf{\Delta }_{\epsilon }\) only has an impact when \(\gamma _{\mathrm {x}}\ne 1\); this impact vanishes not only for Gaussian pdfs but also with decreasing step-size \(\mu \) (which comes along naturally with growing filter order M). Comparing the approximation term \((\gamma _{\mathrm {x}}-1) m_{\mathrm {x}}^{(2,2)} \text {tr}[\mathbf{A}^{T}\mathbf{A}] \mathbf{\Delta }_{\epsilon }\) with \(\gamma _{\mathrm {x}} m_{\mathrm {x}}^{(2,2)} \text {tr}[\mathbf{A}^{T}\mathbf{A}]\mathbf{I}_{M+P}\), we recognize that the approximation term is smaller by a factor of order 1/M and thus diminishes with higher filter order.
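To get a feeling for the size of the pdf shape correction, the value can be estimated from samples. The sketch below uses the expression \(\gamma _{\mathrm {x}}=1+\left (m_{\mathrm {x}}^{(4)}/m_{\mathrm {x}}^{(2,2)}-3\right)/M\) quoted in Section 4 and estimates the two moments from a white sequence; the sample sizes, distributions, and function names are illustrative assumptions.

```python
import numpy as np

def gamma_x(m4, m22, M):
    """pdf shape correction value for filter order M."""
    return 1.0 + (m4 / m22 - 3.0) / M

def sample_moments(x):
    """Estimate m4 = E[x_k^4] and m22 = E[x_k^2 x_l^2], l != k, from a white sequence."""
    m4 = np.mean(x**4)
    m22 = np.mean(x[1:]**2 * x[:-1]**2)          # neighboring samples serve as l != k
    return m4, m22

rng = np.random.default_rng(5)
n = 400_000
gauss = rng.standard_normal(n)                            # Gaussian, unit variance
uniform = rng.uniform(-np.sqrt(3.0), np.sqrt(3.0), n)     # uniform, unit variance
M = 10
g_gauss = gamma_x(*sample_moments(gauss), M)
g_unif = gamma_x(*sample_moments(uniform), M)
```

For the unit-variance uniform distribution, the analytic moments are \(m_{\mathrm {x}}^{(4)}=9/5\) and \(m_{\mathrm {x}}^{(2,2)}=1\), so the correction value for M=10 is 0.88, a sub-Gaussian case in the sense used above; for the Gaussian sequence the estimate is close to one.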

Note further that the term in the LMS algorithm where the asymptotic equivalence applies is proportional to \(\mu ^{2}\). It thus has no impact for small step-sizes but is certainly expected to have one on the stability bound. A first conclusion, therefore, is that the error in the parameter error vector covariance matrix due to this approximation is of order \({\mathcal {O}}(\mu ^{2})\). Note that the step-size being small is not to be interpreted as small relative to the step-size at the stability bound but small in absolute terms, i.e., μ≪1, which is certainly given for long filters as μ∼1/M. The consequence that the applied approximation is negligible for small step-sizes (large filter order M) as well as for Gaussian-type processes is reflected in Lemma 2.2 by the wording “essentially.” This means that in extreme cases (large μ, i.e., small M, and far from Gaussian), a very small proportion can indeed leak into the complementary space. At the first update with \(\mathbf{K}_{0}=\mathbf{I}\),

is obtained, a polynomial in \(\mathbf{A}\mathbf{A}^{T}\).

Now, the proof starts for general updates from \(\mathbf{K}_{k}\) to \(\mathbf{K}_{k+1}\). While the first terms that are linear in \(\mu \) are straightforward, the quadratic part in \(\mu \) needs further attention.

Here, the same asymptotic equivalence concept as used above is imposed, i.e., \(\text{diag}[\mathbf{A}^{T}\mathbf{K}_{k}\mathbf{A}]\approx \text{tr}[\mathbf{A}\mathbf{A}^{T}\mathbf{K}_{k}]\mathbf{I}_{M+P}/M\), resulting in

and eventually obtaining

This can now be split into two parts, one in the modal space, \(\mathbf{K}^{\|}\), and one in its orthogonal complement, \(\mathbf{K}^{\perp }\), as in (7) and (8) above, and the following is obtained:

The consequence of this statement is that the parameter error vector covariance matrix is forced by the driving process to remain only in the modal space of the latter. This is not only true for its initial values but also at every time instant k. The components of the orthogonal complement either remain there or die out. This statement will be addressed later in greater detail in the context of step-size bounds for stability. Note also that for complex-valued processes, the only difference in (29) is the occurrence of \(\mathbf{A}\mathbf{A}^{H}\mathbf{K}_{k}\mathbf{A}\mathbf{A}^{H}\) rather than \(2\mathbf{A}\mathbf{A}^{T}\mathbf{K}_{k}\mathbf{A}\mathbf{A}^{T}\).

3 Learning and steady-state behavior

As we have already recognized, the additive noise term, independent of its statistics, also lies in the modal space of the driving process; the modal space is thus entirely responsible for the learning and steady-state behavior. Components of the orthogonal complement die out as long as \(0<\mu <1/\left [m_{\mathrm {x}}^{(2,2)}\lambda _{\max }\right ]\), \(\lambda _{\max }\) denoting the largest eigenvalue of \(\mathbf{R}_{\text{uu}}\).\(^{4}\) As we will see later, the step-size bound for the component \(\mathbf{K}^{\|}\) of the modal space is smaller and thus all terms in the orthogonal complement will die out for the step-size range of interest. The learning behavior is thus typically derived based on the modal space, i.e., the terms on the main diagonal of \({\mathbf {K}}^{\|}_{k}\). To obtain this, we start with (30) and transform the modal matrices by a unitary matrix \(\mathbf{Q}\) such that \({\mathbf {Q}}{\mathbf {K}}_{k}^{\|}{\mathbf {Q}}^{T}=\Lambda _{K_{k}}\) and \(\mathbf{Q}\mathbf{A}\mathbf{A}^{T}\mathbf{Q}^{T}=\mathbf{Q}\mathbf{R}_{\text{uu}}\mathbf{Q}^{T}=\Lambda _{\mathrm {u}}\). We can now apply a vectorization \(\Lambda _{\mathrm {u}}{\mathbf {{1}}}={\mathbf {{\lambda }}}_{\mathrm {u}},\ \Lambda _{K_{k}}{\mathbf {{1}}}={\mathbf {{\lambda }}}_{K_{k}}\) and obtain the evolution in the modal space as

The eigenvalues of matrix \(\mathbf{B}\) define the learning speed, in particular the largest eigenvalue \(\lambda _{\max }(\mathbf{B}(\mu ))\). We call

the optimal step-size, causing fastest convergence.
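The dependence of the learning speed on the step-size can be explored with a simple grid search. The sketch below is an illustration under assumptions: it takes the modal-space matrix in the form \(\mathbf{B}(\mu )=\mathbf{I}-2\mu \Lambda _{\mathrm {u}}+\mu ^{2}m_{\mathrm {x}}^{(2,2)}\left (2\Lambda _{\mathrm {u}}^{2}+\gamma _{\mathrm {x}}{\mathbf {\lambda }}_{\mathrm {u}}{\mathbf {\lambda }}_{\mathrm {u}}^{T}\right)\), which reduces to the classical Gaussian case for \(m_{\mathrm {x}}^{(2,2)}=\gamma _{\mathrm {x}}=1\) and may differ in detail from the matrix defined in the text. The eigenvalues and the grid limits are hypothetical.

```python
import numpy as np

def modal_matrix(lam, mu, m22=1.0, gamma=1.0):
    """Assumed form B = I - 2 mu Lam + mu^2 m22 (2 Lam^2 + gamma lam lam^T)."""
    Lam = np.diag(lam)
    return (np.eye(len(lam)) - 2 * mu * Lam
            + mu**2 * m22 * (2 * Lam @ Lam + gamma * np.outer(lam, lam)))

def largest_eigenvalue(lam, mu):
    # B is symmetric, so eigvalsh applies; its largest eigenvalue governs the slowest mode
    return np.max(np.linalg.eigvalsh(modal_matrix(lam, mu)))

lam = np.array([0.2, 0.5, 1.0, 2.3])             # hypothetical eigenvalues of R_uu
# grid upper end: a conservative Gaussian-type limit, used here only as an assumption
mu_max = 2.0 / (2 * lam.max() + lam.sum())
grid = np.linspace(1e-4, mu_max, 400, endpoint=False)
rho = np.array([largest_eigenvalue(lam, mu) for mu in grid])
mu_opt = grid[np.argmin(rho)]                    # step-size with the fastest slowest mode
```

The grid search simply picks the step-size that minimizes the largest eigenvalue of the assumed matrix; a finer grid or a scalar minimizer could be substituted without changing the idea.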

Steady-state behavior: From \(\mathbf{I}-\mathbf{B}\), steady-state values such as the misadjustment can be computed. As terms in the orthogonal complement die out, \({\mathbf {K}}_{\infty }={\lim }_{k\to \infty } {\mathbf {K}}_{k}\) is expected to exist only in the modal space of \(\mathbf{R}_{\text{uu}}\). Computing the steady-state solution for k→∞ and omitting the approximation error terms \({\mathcal {O}}(\mu ^{2})\) in the following for simplicity,

is obtained, or equivalently

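Since \(\mathbf{K}_{\infty }\) exists only in the modal space of \(\mathbf{A}\mathbf{A}^{T}\), diagonalizing both by the same unitary matrix leads to \(\mathbf{Q}\mathbf{K}_{\infty }\mathbf{Q}^{T}=\Lambda _{K}\) and \(\mathbf{Q}\mathbf{A}\mathbf{A}^{T}\mathbf{Q}^{T}=\Lambda _{\mathrm {u}}\).

Stacking the diagonal values of the matrices into vectors, \(\Lambda _{\mathrm {u}}\mathbf{1}={\mathbf {\lambda }}_{\mathrm {u}}\), \(\Lambda _{K}\mathbf{1}={\mathbf {\lambda }}_{K}\), with \({\mathbf {\lambda }}_{\mathrm {u}}=[\lambda _{1},\lambda _{2},\ldots ,\lambda _{M}]^{T}\), the following is obtained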

resulting in the well-known form [11] [Eq. (3.15)]:

with the abbreviation

obtained by employing the matrix inversion lemma [13]: \(\left [P(\Lambda _{\mathrm {u}})+{\mathbf {{\lambda }}}_{\mathrm {u}}{\mathbf {{\lambda }}}_{\mathrm {u}}^{T}\right ]^{-1}{\mathbf {{\lambda }}}_{\mathrm {u}}=1/\left [1+{\mathbf {\lambda }}_{\mathrm {u}}^{T}P^{-1}(\Lambda _{\mathrm {u}}){\mathbf {{\lambda }}}_{\mathrm {u}}\right ] P^{-1}(\Lambda _{\mathrm {u}}){\mathbf {{\lambda }}}_{\mathrm {u}}\). The final steady-state system mismatch is thus given by

and the misadjustment

the only differences from the classic solutions for SIRPs [11] being the term \(\gamma _{\mathrm {x}}\), which captures the influence of the fourth-order moments \(m_{\mathrm {x}}^{(4)}\) and \(m_{\mathrm {x}}^{(2,2)}\), and the explicit appearance of \(m_{\mathrm {x}}^{(2,2)}\).
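The role of the matrix inversion lemma in the steady-state computation can be illustrated for the classical Gaussian case. The sketch below assumes \(m_{\mathrm {x}}^{(2,2)}=\gamma _{\mathrm {x}}=1\), the modal-space matrix \(\mathbf{B}=\mathbf{I}-2\mu \Lambda _{\mathrm {u}}+\mu ^{2}\left (2\Lambda _{\mathrm {u}}^{2}+{\mathbf {\lambda }}_{\mathrm {u}}{\mathbf {\lambda }}_{\mathrm {u}}^{T}\right)\), and a misadjustment defined as \(\text{tr}[\mathbf{K}_{\infty }\mathbf{R}_{\text{uu}}]/\sigma _{v}^{2}\). It compares a direct linear solve with the well-known closed form for this case; the general expressions of the text with \(\gamma _{\mathrm {x}}\) and \(m_{\mathrm {x}}^{(2,2)}\) are not reproduced, and all names and values are hypothetical.

```python
import numpy as np

def misadjustment_direct(lam, mu, noise_var=1.0):
    """Solve (I - B) lam_K = mu^2 sigma_v^2 lam_u and return lam_u^T lam_K / sigma_v^2."""
    M = len(lam)
    B = (np.eye(M) - 2 * mu * np.diag(lam)
         + mu**2 * (2 * np.diag(lam**2) + np.outer(lam, lam)))
    lam_K = np.linalg.solve(np.eye(M) - B, mu**2 * noise_var * lam)
    return lam @ lam_K / noise_var

def misadjustment_closed_form(lam, mu):
    """Closed form for the Gaussian case, obtained with the matrix inversion lemma."""
    s = np.sum(mu * lam / (2.0 - 2.0 * mu * lam))
    return s / (1.0 - s)

lam = np.array([0.2, 0.5, 1.0, 2.3])             # hypothetical eigenvalues of R_uu
mu = 0.05
direct = misadjustment_direct(lam, mu)
closed = misadjustment_closed_form(lam, mu)
```

Both routes give the same value in this setting; the closed form avoids the matrix inversion because the matrix to be inverted is a diagonal matrix plus a rank-one term.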

For complex-valued driving processes, the final steady-state system mismatch is given correspondingly by

and the misadjustment

Stability bounds: The step-size bound can now be derived either from (42) or by means of Gershgorin’s circle theorem from the largest eigenvalue of matrix \(\mathbf{B}\) in (33). The result is identical whichever way is used and conservative for real-valued \(x_{k}\):

Depending on the statistics of the driving process, a more or less conservative bound is obtained. It is worth distinguishing sub- and super-Gaussian cases. For sub-Gaussian distributions, \(\gamma _{\mathrm {x}}<1\), the bound grows, while for super-Gaussian distributions, \(\gamma _{\mathrm {x}}>1\), the bound decreases. The step-size bound thus varies with the distribution type, approximately through the term \(\text{tr}[\mathbf{R}_{\text{uu}}]\) in the bound (46). For SIRP (and thus Gaussian) distributions, as well as for very long filters, \(\gamma _{\mathrm {x}}=1\) and thus

This result is identical to (3) for Gaussian processes as \(m_{\mathrm {x}}^{(2,2)}=1\). For complex-valued processes \(x_{k}\), the bounds are obtained in a very similar way, simply replacing the term \(2\lambda _{\max }\) with \(\lambda _{\max }\).

For a real-valued statistically white driving process, an exact bound without Gershgorin’s theorem can be computed, leading to

thus also a significantly larger bound than (3), but still dependent on the distribution by the value of γ x.

Such a result, in particular the lower 2/3 bound in (47) and (3), originating from the correlation of the random process, is conservative. Note that the largest eigenvalue \(\lambda _{\max }\) is related to the maximal magnitude of the correlation filter, i.e., \(\lambda _{\max }\le \max _{\Omega }|A(e^{j\Omega })|^{2}\), with equality being approached for M→∞. Thus, for very long filters, the energy (\(\text{tr}[\mathbf{R}_{\text{uu}}]\)) will not be entirely concentrated around its peak magnitude, unless we intended to build an oscillator. With this argument, the term \(\lambda _{\max }\) can be omitted for the long filter (see also [29] Eq. (10.4.40)), and a much larger bound is obtained:

We will return to the discussion of stability bounds in the context of simulation results in Section 5.

4 Very long adaptive filters

An analysis for very long filter orders M has been considered by Butterweck [18, 23–25], however, in the context of avoiding the IA. The previously introduced term \(\gamma _{\mathrm {x}}=\left (1+\left (m_{\mathrm {x}}^{(4)}/m_{\mathrm {x}}^{(2,2)}-3\right)/M\right)\) already hints towards γ x=1 for very large filter orders M. But note that the derivation in the previous sections required a further asymptotic equivalence result that can be traded against a much simpler asymptotic equivalence result for the long filter case. This will provide us with a second opinion at least for the special case of very long filters that should be in agreement with our findings so far.

The main reason for eliminating our first asymptotic equivalence lies in the fact that for very long linear filters, it is well known that Toeplitz matrices \({\mathbf {A}}\) behave in an asymptotically equivalent manner to circulant matrices [31], say \({\mathbf {C}}\). Rather than \({\mathbf {A}}{\mathbf {A}}^{T}\), now, \({\mathbf {C}}{\mathbf {C}}^{T}={\mathbf {R}}_{\text {uu}}\) and the diagonalization is achieved by DFT matrices \({\mathbf {F}}\in \mathbb {C}^{M\times M}\): \({\mathbf {C}}={\mathbf {F}}^{H}\Lambda _{\mathrm {u}}^{1/2}{\mathbf {F}}\) and thus \({\mathbf {F}}^{H}\Lambda _{\mathrm {u}}{\mathbf {F}}={\mathbf {R}}_{\text {uu}}\).
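The diagonalization of circulant matrices by the DFT can be checked directly. The sketch below is an illustration under stated assumptions: the helper names (`circulant`, `dft_eigenpairs`, `max_residual`) and the example first column (a truncated, geometrically decaying sequence) are hypothetical and only serve to verify numerically that each Fourier vector is an eigenvector of a circulant matrix, with the DFT of the first column as eigenvalue.

```python
import cmath


def circulant(c):
    """Circulant matrix with first column c: C[i][j] = c[(i - j) mod M]."""
    M = len(c)
    return [[c[(i - j) % M] for j in range(M)] for i in range(M)]


def dft_eigenpairs(c):
    """Eigenvalues (DFT of c) and unit-norm Fourier eigenvectors of circulant(c)."""
    M = len(c)
    pairs = []
    for k in range(M):
        lam = sum(c[n] * cmath.exp(-2j * cmath.pi * k * n / M) for n in range(M))
        vec = [cmath.exp(2j * cmath.pi * k * n / M) / M ** 0.5 for n in range(M)]
        pairs.append((lam, vec))
    return pairs


def max_residual(c):
    """Largest entry of |C v - lambda v| over all Fourier eigenpairs."""
    C = circulant(c)
    M = len(c)
    worst = 0.0
    for lam, v in dft_eigenpairs(c):
        Cv = [sum(C[i][j] * v[j] for j in range(M)) for i in range(M)]
        worst = max(worst, max(abs(Cv[i] - lam * v[i]) for i in range(M)))
    return worst


# Hypothetical example: truncated geometric sequence as first column.
c_example = [0.6 * 0.8 ** n for n in range(8)]
print(max_residual(c_example))  # numerically zero up to rounding
```

Since the eigenvalues of \({\mathbf {C}}\) are the DFT values of its first column, the eigenvalues of \({\mathbf {C}}{\mathbf {C}}^{T}\) are their squared magnitudes, which is how the spectrum of the driving process enters the analysis.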

Theorem 4.1.

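Assume that the driving process \({\mathbf {u}}_{k}=C[x_{k}]\) originates from a linearly filtered white random process \(x_{k}\) according to (9)–(17) by cyclic filtering, i.e., \({\mathbf {u}}_{k}={\mathbf {C}}{\mathbf {x}}_{k}\) with circulant matrix \({\mathbf {C}}\in \mathbb {R}^{M\times M}\). Then, any initial parameter error covariance matrix \({\mathbf {K}}_{0}\) evolves only in the modal space of \({\mathbf {R}}_{\text {uu}}\), now defined by DFT matrices \({\mathbf {F}}\in \mathbb {C}^{M\times M}\).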

Proof.

Following the fact that circulant matrices can be diagonalized by DFT matrices, say \({\mathbf {F}}\), reconsider the term \(E[{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}{\mathbf {K}}_{k}{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}]\), remembering that a process linearly filtered by a unitary filter \({\mathbf {F}}\) for very long filters preserves its properties (see Appendix 2 for proof) at the output of the filter. It is found that

In the last line, we assumed that \({\mathbf {K}}_{k}\) is also circulant. But similar to the considerations in Section 2, we could start from an initial parameter error covariance matrix \({\mathbf {K}}_{0}\) that is not circulant, e.g., \({\mathbf {K}}_{0}={\mathbf {w}}{\mathbf {w}}^{T}\). In this case, \({\mathbf {K}}_{0}\) can be separated into one part that lies in the modal space, defined by \({\mathbf {F}}\), and one part from its complement space. As the part in the complement space is no longer excited, it will follow the evolution as described in (32) and die out.

Note that the filter is now being formulated in terms of a complex-valued driving process \({\mathbf {f}}_{k}={\mathbf {F}}{\mathbf {x}}_{k}\) even though \({\mathbf {x}}_{k}\in \mathbb {R}^{M\times 1}\). Noticing that the center term of the last equation is of diagonal form, and simplifying the terms to

with the particular solution \({\mathbf {{L}}}=\Lambda _{\mathrm {u}}^{1/2} \Lambda _{{\mathbf {K}}_{k}} \Lambda _{\mathrm {u}}^{1/2}\) and the property \(\text {tr}[{\mathbf {{\!L}}}]=\text {tr}\left [\Lambda _{\mathrm {u}}^{1/2} \Lambda _{{\mathbf {K}}_{k}} \Lambda _{\mathrm {u}}^{1/2}\right ]=\text {tr}[\!\Lambda _{\mathrm {u}} \Lambda _{{\mathbf {K}}_{k}}]\). Note that \(m_{\mathrm {f}}^{(4)}=2m_{\mathrm {f}}^{(2,2)}\) as shown in Appendix 2, m f denoting the corresponding moments of the driving process f k . The parameter error vector covariance matrix in transformed form \(\Lambda _{{\mathbf {K}}_{k+1}}={\mathbf {F}}{\mathbf {K}}_{k+1}{\mathbf {F}}^{H}\) now evolves as

This shows that for very large filter orders, our previous considerations hold exactly and the parameter error vector covariance matrix \({\mathbf {K}}_{k}\) indeed remains in the modal space of the driving process \({\mathbf {u}}_{k}\) defined by the DFT matrices \({\mathbf {F}}\in \mathbb {C}^{M\times M}\).

Steady-state behavior: The steady-state values are obtained for k→∞, i.e.,

Reshaping the diagonal terms into vectors \(\Lambda _{{\mathbf {K}}}{\mathbf {1}}=\lambda _{{\mathbf {K}}}\) leads to

and finally by applying the matrix inversion lemma

with

The final steady-state system mismatch is thus given by

and the misadjustment reads

Note that this result for the long filter depends only on the joint moment \(m_{\mathrm {f}}^{(2,2)}\) of the DFT of the driving process. As shown in the Appendix, for most distributions, this moment takes on the same value. This explains why the long LMS filter behaves more or less identically, independently of the driving process, as long as the correlation is the same. The interested reader may like to compare this with an older publication by Gardner [30] in which the very similar fourth-order moment \(m_{\mathrm {x}}^{(4)}\) was emphasized for purely white driving processes.

Stability bounds: The last equation in turn results in the conservative step-size bound

The step-size bound for the very long filter appears considerably larger (by one third when compared to (47)) than that for short lengths. Following the same argument for long filters as at the end of the previous section, we can argue that \(\lambda _{\max }\) is small compared to \(\text {tr}[{\mathbf {R}}_{\text {uu}}]\) and obtain the even larger bound

which is identical to (49) as for long filters \(m_{\mathrm {x}}^{(2,2)}=m_{\mathrm {f}}^{(2,2)}\).

An alternative bound is also possible now. As the eigenvalues \(\lambda _{i}\) simply originate from the circulant matrices \({\mathbf {C}}\) that linearly filter the driving process, they are obtained by a DFT on the filter matrices \({\mathbf {C}}{\mathbf {C}}^{T}\), or equivalently correspond to the powers of the spectrum of \(u_{k}\) at equidistant frequencies \(2\pi /M\), allowing an alternative bound for \(\lambda _{\max }\ge \max _{\Omega }|C(e^{j\Omega })|^{2}\), both being identical for large M. For the long filter, we thus find

This more conservative bound corresponds to the spectral variations in the driving process while the former bound including the trace term focuses more on the gain of the correlation filter. A similar (even more conservative) bound based on the power spectrum of the driving process has already been proposed by Butterweck [24] and reads in our notation

The bound was derived for the long filter without IA but under Gaussian processes for which \(m_{\mathrm {f}}^{(2,2)}=m_{\mathrm {x}}^{(2)}\), following a wave-theoretical argument. As for long filters \(\max _{\Omega }|C(e^{j\Omega })|^{2}=\lambda _{\max }({\mathbf {C}}{\mathbf {C}}^{H})=\|{\mathbf {C}}{\mathbf {C}}^{H}\|_{2,\text {ind}}\), Butterweck’s result appears conservative. It is nevertheless reassuring to learn that classic matrix approaches lead to very similar results even though Butterweck’s analysis is based on Gaussian processes and thus the fourth-order moment \(m_{\mathrm {f}}^{(2,2)}\) is not accounted for. In particular, this plays a crucial role for non-Gaussian spherically invariant processes.

5 Validation by simulation

In this section, we validate our theoretical findings in terms of convergence bounds and steady-state behavior of the LMS algorithm by Monte Carlo (MC) simulations. Table 2 below lists six typical zero-mean distributions and corresponding second- and fourth-order moments. The corresponding value before and after the DFT (top and bottom, respectively) is shown for each process. While the first line provides \(m_{\mathrm {x}}\) terms, the second exhibits the corresponding \(m_{\mathrm {f}}\) terms of the complex-valued process after the DFT, according to the relations described in Appendix 2. Note that the values \(m_{\mathrm {f}}\) are provided for large values of the filter order M so that \(\gamma _{\mathrm {x}}=1\) (see also (76) in the Appendix). The first two are classic Gaussian distributions in either \(\mathbb {R}\) or \(\mathbb {C}\). The next three are IID, whether bipolar, uniform, or a product of two independent Gaussian processes (Gauss2). Finally, an SIRP originating from a mixture of two Gaussian processes is presented. While the first two IID processes are sub-Gaussian (\(\gamma _{\mathrm {x}}<1\)), the last IID process as well as the SIRP are super-Gaussian (\(\gamma _{\mathrm {x}}>1\)).

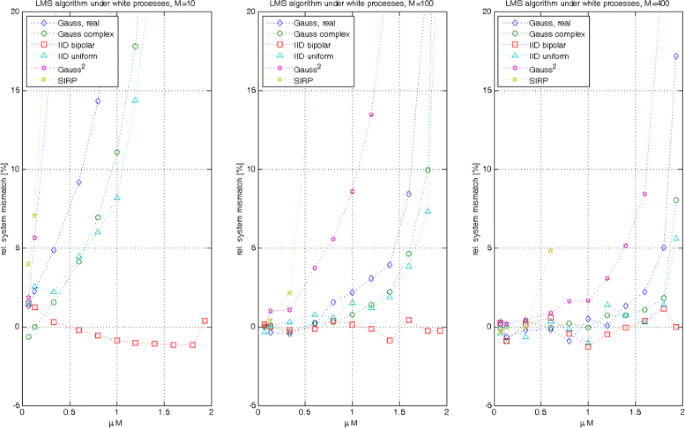

All MC simulations are run on linear combiners and thus satisfy the driving process assumptions A1a and A1b. The M taps of the system \({\mathbf {w}}\) to identify were selected randomly from \({\mathcal {N}}(0,1)\), a fresh selection being made for each MC run. Simulations were run with filter length \(M=\{10,100,400\}\) and step-sizes \(\mu =\{1,2,5,9,12,15,18,21,24,27,29\}\times 1/[15M]\), thus ranging up to the largest possible stability bound 2/M. The driving process had unit variance (\(m_{\mathrm {x}}^{(2)}=1\)) and the additive noise was Gaussian with variance \(10^{-4}\).
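The simulation setup described above can be sketched in a few lines. This is a minimal illustration, not the MATLAB code accompanying the article: the function name `lms_mismatch_curve` is hypothetical, a real-valued white Gaussian driving process is assumed, the regression vector is drawn independently at every step (linear combiner), and the squared parameter error norm is used as a stand-in for the system mismatch of a single run.

```python
import random


def lms_mismatch_curve(M, mu, n_iter, noise_var=1e-4, rng=None):
    """One LMS run on a linear combiner; returns ||w - w_k||^2 over time."""
    rng = rng or random.Random()
    w_true = [rng.gauss(0.0, 1.0) for _ in range(M)]  # fresh system per run
    w_hat = [0.0] * M
    noise_std = noise_var ** 0.5
    curve = []
    for _ in range(n_iter):
        # Independent regression vector with unit-variance entries (assumption).
        u = [rng.gauss(0.0, 1.0) for _ in range(M)]
        d = sum(wi * ui for wi, ui in zip(w_true, u)) + rng.gauss(0.0, noise_std)
        e = d - sum(wi * ui for wi, ui in zip(w_hat, u))
        # LMS update: w_{k+1} = w_k + mu * e_k * u_k
        w_hat = [wi + mu * e * ui for wi, ui in zip(w_hat, u)]
        curve.append(sum((a - b) ** 2 for a, b in zip(w_true, w_hat)))
    return curve


M = 10
# Step-size grid from the text: {1, 2, 5, ..., 29} / (15 M).
step_grid = [k / (15 * M) for k in (1, 2, 5, 9, 12, 15, 18, 21, 24, 27, 29)]
curve = lms_mismatch_curve(M, step_grid[2], n_iter=2000, rng=random.Random(0))
print(curve[0], curve[-1])
```

In a full experiment, such curves would be generated for every step-size of the grid and for each of the six driving processes, and then averaged over many runs.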

In a first step, we analyzed the number of MC runs required for averaging in order to obtain consistent results. Here, the findings of [32] are a great help as they propose to analyze the mismatch fluctuations, defined as

a value that increases when μ approaches the stability bound. Based on experiments with relatively large step-sizes, we decided that 1 000 MC runs provide sufficiently good results. For long filters, the fluctuations become considerably smaller, and 100 MC runs appear sufficient. We thus applied {1 000, 1 000, 100} averages for \(M=\{10,100,400\}\), respectively, to keep simulation times manageable. The observation \(\bar {M}_{\mathrm {S}}\) for the steady-state system mismatch is obtained by ensemble averaging over the number of MC runs and finally averaging the last 10 % of the values over time. As the numbers thus obtained are typically very close to the predicted values, the relative error in system mismatch \(({M}_{\mathrm {S}}-\bar {M}_{\mathrm {S}})/{M}_{\mathrm {S}}\) is computed and plotted as a percentage.
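The averaging procedure just described can be written compactly. The following sketch assumes that the mismatch curves of all MC runs are available as lists of equal length; the function names and the toy data are hypothetical and only illustrate the order of operations (ensemble average first, then time average over the last 10 %, then relative error in percent).

```python
def steady_state_estimate(curves, tail_fraction=0.10):
    """Ensemble-average the runs, then time-average the last tail_fraction."""
    n_runs = len(curves)
    n_iter = len(curves[0])
    ensemble = [sum(run[t] for run in curves) / n_runs for t in range(n_iter)]
    n_tail = max(1, int(round(tail_fraction * n_iter)))
    tail = ensemble[-n_tail:]
    return sum(tail) / len(tail)


def relative_error_percent(predicted, observed):
    """Relative error (M_S - observed) / M_S, expressed as a percentage."""
    return 100.0 * (predicted - observed) / predicted


# Toy data (two short runs), for illustration only.
toy_curves = [
    [1.0, 0.5, 0.2, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.12],
    [1.0, 0.4, 0.2, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.08],
]
observed = steady_state_estimate(toy_curves)
print(observed, relative_error_percent(0.1, observed))
```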

Experiment 1: In the first set of experiments, the white processes with properties in Table 2 were the driving source. The outcome of such experiments provides a validation to the predicted steady-state behavior. For the long filter, (58) simplifies to

while (42) for the arbitrary LMS algorithm simplifies to the same expression with \(m_{\mathrm {x}}^{(2,2)}\) replacing \(m_{\mathrm {f}}^{(2,2)}\). This is in agreement with (76) (see Appendix 2) as for large filter order M; both terms become identical. Such simplified formula is often applied in practice. As we expect differences only if the step-size is large, the precision of the formula is investigated in this experiment.

Figure 1 presents the relative errors \(1-\frac {\bar {M}_{\mathrm {S}}}{M_{\mathrm {S}} }\) obtained, in percentage terms when applying (65).

The results are as follows:

1. In all distributions, the errors obtained for small step-sizes are very small, confirming our theoretical predictions.

2. The larger the filter length M, the smaller the errors. This is expected as formula (65) was not only derived for long filters but also for small step-sizes, which are unavoidable for large filter lengths.

3. Only in the case of M=10, for which a significant \(\gamma _{\mathrm {x}}\neq 1\) is expected, the errors are moderate to large compared to the other cases.

4. As expected, the errors are higher for processes that are the furthest from Gaussian (see, for example, Gauss2) and of large μ (small filter length M). The results in the figure clearly show the impact of our asymptotic equivalence assumption, as the mismatch decreases with smaller step-sizes (which is a consequence of higher filter order M).

5. Even for the SIRPs, our prediction is excellent. Note, however, that this depends very much on how an SIRP is modeled. A \(K_{0}\) SIRP is generated by a product process of a Gaussian process with a random variable that is itself Gaussian distributed. If this RV, serving as the standard deviation of the process and defining its variance, is kept constant for each simulation run, the ensemble average will not converge. As the RV can take on arbitrarily large values (high energy driving process), the adaptation process can become extremely slow for some runs due to its fixed step-size. But even worse, as there is a finite probability that the variance of this process is below any bound, a fixed step-size is then too large for these runs to become stable. Such SIRP processes are only possible in the context of the normalized LMS (NLMS) algorithm. The situation is considerably better if the RV that defines the variance of the random process is itself generated by a random process, producing a slight change at every time instant so as to slowly modify the signal’s variance over time, as is commonly done to resemble speech signals with their slowly changing variance. Note that for SIRPs, \(m_{\mathrm {x}}^{(2,2)}=3\), restricting the step-size range considerably (the stability bound is roughly at \(\mu _{\text {lim}}M=2/3\)).

The step-size range in Fig. 1 is limited to \(\mu =\{1,\ldots ,29\}\times 1/[15M]\). The problem with higher step-size values is that the fluctuations become larger and thus significantly longer averaging is required (see also [32] for explanations of this effect), which is not feasible. In particular for short filters (M=10), the convergence bounds are altered by \(\gamma _{\mathrm {x}}\) and thus the classical bound (3) is still too high. This can be observed very clearly for the IID Gauss2 process, which leads to extremely high errors when operating in close vicinity to the stability bound.

Experiment 2: We repeat the previous experiment, but we compare the results with the much more precise bound derived from (42) for a white driving process (real-valued):

In case the process is complex-valued, the bound (44) simplifies to a similar expression as (66); just the term M−1 in the denominator is to be replaced by M−2.

Figure 2 depicts the results. The major difference now is that the precision becomes much higher even for those processes that are far away from Gaussian (for example Gauss2). No matter what distribution or length of the filter, the error obtained remains below roughly 1 % as long as the step-size does not reach the stability bound.

We compare now our observations for the predicted stability bound (48), which reads in our case for real-valued processes:

We recognize that for large M, we find \(\mu _{\text {lim}}M=2/m_{\mathrm {x}}^{(2,2)}\), thus 2 in general and 2/3 for SIRPs. For small values of M (here M=10), the situation is different and we obtain \(\mu _{\text {lim}}M=\{10/6\approx 1.66,\,20/11\approx 1.81,\,2,\,20/10.8\approx 1.85,\,10/9\approx 1.11,\,5/9\approx 0.55\}\) for our six processes, an excellent agreement when compared to the left-hand side of Fig. 2.
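The six values quoted above can be reproduced with a short calculation. The sketch below is an assumption-laden illustration: the closed form in `mu_lim_times_M` was inferred here so that it matches all six quoted numbers for M=10 and the large-M limit \(2/m_{\mathrm {x}}^{(2,2)}\); it is not a quotation of (67). The moment pairs are likewise assumed standard values for unit-variance processes (with \(m_{\mathrm {x}}^{(2,2)}=3\) for the SIRP as stated in the text), since Table 2 is not reproduced here.

```python
def mu_lim_times_M(M, m4, m22):
    """Normalized white-input stability bound, inferred form: 2M / (m22 (M-1) + m4)."""
    return 2.0 * M / (m22 * (M - 1) + m4)


# Assumed (m4, m22) pairs for the six unit-variance processes (illustrative).
moments = {
    "Gaussian (real)": (3.0, 1.0),
    "Gaussian (complex)": (2.0, 1.0),
    "bipolar": (1.0, 1.0),
    "uniform": (1.8, 1.0),
    "Gauss2": (9.0, 1.0),
    "SIRP": (9.0, 3.0),
}

bounds = {name: mu_lim_times_M(10, m4, m22) for name, (m4, m22) in moments.items()}
for name, value in bounds.items():
    print(f"{name}: {value:.3f}")
```

Under these assumptions, the spread between the bipolar and the SIRP case at M=10 comes entirely from the fourth-order moments, which is the dependence the text emphasizes.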

Experiment 3: In a last set of experiments, the previous simulation runs from Experiments 1 and 2 were repeated for correlated driving processes. We applied a linear filter with impulse response

on the six driving processes of the previous experiment. The so-obtained AR(1) process with unit gain exhibits a relatively high correlation, as is common in speech processes. If, as in this case, the filter \(A(q^{-1})=\frac {0.6}{1-0.8q^{-1}}\) becomes very long (\(P\rightarrow \infty \)), the largest eigenvalue becomes small compared to \(\text {tr}[{\mathbf {R}}_{\text {uu}}]\). Thus, the convergence bound for μ becomes practically \(2/\left (m_{\mathrm {f}}^{(2,2)}\text {tr}[\textbf {\!R}_{\text {uu}}]\right)\), which agrees well with our simulation results. Note that such a bound can be much larger as well as much smaller than the classic bound in (3), depending on the value of \(m_{\mathrm {f}}^{(2,2)}\). In Fig. 3, we compare our simulation results with the predicted values from (42) and (44), for real- and complex-valued processes, respectively. As before, we find excellent agreement in the order of 1 % error. For a small filter length M=10, the largest eigenvalue becomes \(\lambda _{\max }=5.5\) and the predicted stability bounds are reduced from \(\mu _{\text {lim}}M=\{1.66,1.81,2,1.85,1.11,0.55\}\) of the white driving processes to \(\mu _{\text {lim}}M=\{0.95,1.29,1.05,1.01,0.74,0.31\}\), which is well reflected in the simulation results. For larger filters, the stability bound moves towards \(\mu _{\text {lim}}M\rightarrow 2\) (2/3 for SIRP) for all processes, independent of the correlation. For M=400, the largest eigenvalue has only slightly increased to \(\lambda _{\max }=8.9\) and thus has little influence on the stability bound.
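The eigenvalue quoted for M=10 can be checked with a small computation. This sketch assumes the infinitely long filter \(0.6/(1-0.8q^{-1})\) driven by a unit-variance white process, so that the autocorrelation is \(r(k)=0.8^{|k|}\); the helper names are hypothetical, and the largest eigenvalue is obtained by plain power iteration rather than by any method used in the article.

```python
def ar1_autocorr_matrix(M, a=0.8, b=0.6):
    """Toeplitz autocorrelation matrix of b/(1 - a q^-1) driven by unit-variance white noise."""
    r0 = b * b / (1.0 - a * a)  # equals 1 for b = 0.6, a = 0.8 (unit gain)
    return [[r0 * a ** abs(i - j) for j in range(M)] for i in range(M)]


def largest_eigenvalue(R, n_iter=300):
    """Power iteration followed by a Rayleigh quotient (R symmetric, positive)."""
    M = len(R)
    v = [1.0 / M ** 0.5] * M
    for _ in range(n_iter):
        w = [sum(R[i][j] * v[j] for j in range(M)) for i in range(M)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    Rv = [sum(R[i][j] * v[j] for j in range(M)) for i in range(M)]
    return sum(vi * rvi for vi, rvi in zip(v, Rv))


R10 = ar1_autocorr_matrix(10)
lam_max_10 = largest_eigenvalue(R10)
trace_10 = sum(R10[i][i] for i in range(10))
peak_gain = 0.6 ** 2 / (1.0 - 0.8) ** 2  # max over frequency of |A|^2, equals 9

print(round(lam_max_10, 2), trace_10, peak_gain)
```

The computed eigenvalue stays below the spectral peak of 9 and grows towards it with M, while the trace grows linearly with M, which is why the eigenvalue term loses influence for long filters.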

We finally are interested in the fluctuations of the various runs (see [32]), defined in (64), which are evaluated at steady state now:

As expected, the fluctuations, depicted in Fig. 4, increase considerably only for step-sizes close to the stability bound. For the very long filter, such bound was correctly predicted in (61), independent of pdf and correlation.

6 Conclusions

In this contribution, a stochastic analysis of second-order moments in terms of the parameter error covariance matrix has been presented for the LMS algorithm under the large class of linearly filtered random driving processes. While results were previously only known for a small number of statistics, this contribution deals with the large class of linearly filtered white processes with arbitrary statistics. Particularly interesting is the fact that the parameter error covariance matrix is essentially being forced to remain in the modal space of the driving process, defined by its autocorrelation matrix \({\mathbf {R}}_{\text {uu}}\). Such a property was shown to be independent of the correlation and the pdf of the driving process. Next to the independence assumption, an asymptotic equivalence is required for this derivation, producing minor errors only for large step-sizes (short filters) and pdfs that are far from Gaussian. The error term on the other hand also quantifies the filter behavior and allows it to be predicted accurately even for small filters. Alternatively, for very long filters, an even simpler asymptotic equivalence guarantees exact results. The result for the very long filter is particularly interesting as it shows a more relaxed step-size bound compared to the short filter. Moreover, an alternative step-size bound that includes the maximum gain of the spectral filter rather than the trace of the autocorrelation matrix is provided, this potentially being a more practical solution. It is shown that the joint moment \(m_{\mathrm {x}}^{(2,2)}\) of the decorrelated process is more relevant than the term \(m_{\mathrm {x}}^{(2)}\) for the steady-state prediction even though it has no impact for most typical processes. Furthermore, a correction factor \(\gamma _{\mathrm {x}}\) occurs in the step-size bound, describing the influence of having either short filters or non-Gaussian driving processes.
These results may appear less practical than previous ones since more details regarding the statistics of the driving process now need to be known, but note that also for classic results, the second-order moments, i.e., the autocorrelation matrix, needed to be known a priori (or estimated). Bringing in more parameters is the price of having a more general form of theory.

7 Endnotes

1 Naturally, the basic LMS algorithm requires modification to apply it successfully in various applications; we simply refer here to the entire family with similar algorithmic structure whose core elements are the LMS algorithm. Worth mentioning is in any case the normalized version NLMS of the LMS algorithm that ensures independence of the input’s signal power. Such small modification alone, however, already complicates the analysis substantially [11, 33]. Even for very long filters, non-Gaussian excitation such as speech signals can lead to severe problems [34] if no normalization is applied.

2 The terms white and decorrelated will henceforth be used interchangeably.

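3 Note that the requirement of having statistically independent regression vectors is actually far too strong as we only need to ensure that \(E[{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}({\mathbf {w}}-{\mathbf {w}}_{k})({\mathbf {w}}-{\mathbf {w}}_{k})^{T}{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}]=E[{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}{\mathbf {K}}_{k}{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}]\). One could also call it independence approximation and just require this property.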

4 The procedure for obtaining such result is the same as explained in the following paragraph for K ∥, only much simpler, as the trace terms do not appear.

8 Appendix

8.1 Appendix 1: decomposition of symmetric matrices

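A problem in the derivation of the LMS behavior is that the covariance matrices as they appear for the parameter error vector \({\mathbf {K}}_{k}=E[({\mathbf {w}}-{\mathbf {w}}_{k})({\mathbf {w}}-{\mathbf {w}}_{k})^{T}]\) are in general not in the modal space of the driving process \({\mathbf {u}}_{k}=[u_{k},u_{k-1},\ldots ,u_{k-M+1}]^{T}\) with autocorrelation matrix \({\mathbf {R}}_{\text {uu}}=E[{\mathbf {u}}_{k}{\mathbf {u}}_{k}^{T}]={\mathbf {Q}}\Lambda _{\mathrm {u}}{\mathbf {Q}}^{T}\), thus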

Since the derivation of the LMS algorithm only requires that the trace of such matrices be known, it is sufficient to analyze only the algorithm’s impact on the parameter error vector with respect to R uu. It is therefore proposed to decompose a given matrix K into a first part, i.e., in the modal space of the autocorrelation matrix R uu of the driving process u k and a second part in its orthogonal complement space, i.e.,

Here, P(R uu) denotes a polynomial in R uu. Note that due to the Cayley-Hamilton theorem, an exponent larger than M−1, with M denoting the system order, is not required [28].

Lemma 1.

Any symmetric matrix K can be decomposed into a part from the subspace of a given modal space \({\mathcal {R}}_{\mathrm {u}}=\text {span}\left \{{\mathbf {I}},{\mathbf {R}}_{\text {uu}},{\mathbf {R}}_{\text {uu}}^{2},\ldots,{\mathbf {R}}_{\text {uu}}^{M-1}\right \}\) and its orthogonal complement subspace \({\mathcal {R}}_{\mathrm {u}}^{\perp }\) for which \(\text {tr}\left [{\mathbf {K}}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\right ]=0\) for any value of l=0,1,2,….

Proof.

The optimal set of coefficients for approximating the symmetric matrix K is found by

which is a simple quadratic problem with linear solution:

The trace of \({\mathbf {R}}_{\text {uu}}^{0}={\mathbf {I}}\) is simply M, the system order. Due to the orthogonality property of the least squares solution, it is found that

for arbitrary values of l=0,1,2,….

As a further consequence, terms of the form \(\text {tr}\left [{\mathbf {R}}_{\text {uu}}^{m}{\mathbf {K}}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\right ]\) vanish, and thus \({\mathbf {R}}_{\text {uu}}^{m}{\mathbf {K}}^{\perp }{\mathbf {R}}_{\text {uu}}^{l}\in {\mathcal {R}}_{\mathrm {u}}^{\perp }\), while any polynomial \(P({\mathbf {R}}_{\text {uu}})\in {\mathcal {R}}_{\mathrm {u}}\). Note that it is straightforward to extend the results to Hermitian matrices that occur for complex-valued processes.
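The decomposition of Lemma 1 can be illustrated for the smallest non-trivial case M=2, where the modal space is spanned by \({\mathbf {I}}\) and \({\mathbf {R}}_{\text {uu}}\). The sketch below assumes the Frobenius norm as least-squares criterion, which leads to normal equations built from traces; the helper names and the numerical example (\({\mathbf {K}}={\mathbf {w}}{\mathbf {w}}^{T}\) with an arbitrary \({\mathbf {w}}\)) are hypothetical.

```python
def matmul(A, B):
    """Product of two small square matrices given as nested lists."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def trace(A):
    return sum(A[i][i] for i in range(len(A)))


def decompose_2x2(K, R):
    """Split symmetric K into a*I + b*R (modal part) and a remainder K_perp."""
    # Normal equations of the Frobenius-norm fit:
    #   [tr(I)  tr(R)  ] [a]   [tr(K)  ]
    #   [tr(R)  tr(R^2)] [b] = [tr(K R)]
    g00, g01, g11 = 2.0, trace(R), trace(matmul(R, R))
    b0, b1 = trace(K), trace(matmul(K, R))
    det = g00 * g11 - g01 * g01  # zero only if R is a multiple of I
    a = (b0 * g11 - g01 * b1) / det
    b = (g00 * b1 - g01 * b0) / det
    K_par = [[a * (1.0 if i == j else 0.0) + b * R[i][j] for j in range(2)] for i in range(2)]
    K_perp = [[K[i][j] - K_par[i][j] for j in range(2)] for i in range(2)]
    return K_par, K_perp


R = [[1.0, 0.8], [0.8, 1.0]]
w = [1.0, 2.0]
K = [[w[i] * w[j] for j in range(2)] for i in range(2)]
K_par, K_perp = decompose_2x2(K, R)
print(K_par, K_perp, trace(K_perp), trace(matmul(K_perp, R)))
```

Both traces come out as zero up to rounding, and since higher powers of \({\mathbf {R}}_{\text {uu}}\) are linear combinations of \({\mathbf {I}}\) and \({\mathbf {R}}_{\text {uu}}\) by the Cayley-Hamilton theorem, the same holds for every exponent l.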

8.2 Appendix 2: relation to higher order moments

Here, the second- and fourth-order moments are considered once a random process is Fourier-transformed by a unitary matrix \({\mathbf {F}}\). Assume a random process \(x_{k}\) with the properties (9)–(17). Take M consecutive values of such a process, build a vector \({\mathbf {x}}_{k}\), and convert it to its Fourier transform by \({\mathbf {f}}_{k}={\mathbf {F}}{\mathbf {x}}_{k}\).

It is straightforward to show that the process f k is zero mean if x k is zero mean and, due to the unitary property of F, it is found that \(m_{\mathrm {f}}^{(2)}=E[\!|f_{k}|^{2}]=1\) as long as \(m_{\mathrm {x}}^{(2)}=E[\!|x_{k}|^{2}]=1\).

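For the fourth-order moments, the expression \(E[{\mathbf {x}}_{k}{\mathbf {x}}_{k}^{T}{\mathbf {g}}{\mathbf {h}}^{H}{\mathbf {x}}_{k}{\mathbf {x}}_{k}^{T}]\) is considered, where \({\mathbf {g}}\) and \({\mathbf {h}}\) are simply two different rows of \({\mathbf {F}}\), thus \({\mathbf {g}}^{H}{\mathbf {h}}={\mathbf {g}}^{T}{\mathbf {h}}=0\) and \({\mathbf {g}}^{H}{\mathbf {g}}={\mathbf {h}}^{H}{\mathbf {h}}=1\). By \({\mathbf {L}}={\mathbf {g}}{\mathbf {h}}^{H}\) in (21), it is found that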

From here, the following can be computed:

The latter relation is simply due to the fact that each element of the DFT matrix \({\mathbf {F}}\) is a rotation scaled by \(1/\sqrt {M}\). For \(M\rightarrow \infty \), it is concluded that \(m_{\mathrm {f}}^{(2,2)}=m_{\mathrm {x}}^{(2,2)}\). The result would not change if \(x_{k}\in \mathbb {C}\). It is in fact this equivalence that convinced us to use \(m^{(2,2)}\) rather than \(m^{(4)}\) in our formulations. Note also that the term \(\gamma _{\mathrm {x}}\) from (25) shows up here again. It is thus the correction term for the joint fourth-order moment that only has an impact on small filter dimensions.

Similarly, for the fourth-order moment, select \({\mathbf {g}}={\mathbf {h}}\) and obtain \(m_{\mathrm {f}}^{(4)}\le 2 m_{\mathrm {x}}^{(2,2)}+\left (m_{\mathrm {x}}^{(4)}-3m_{\mathrm {x}}^{(2,2)}\right) \frac 1M\) and again for large M, \(m_{\mathrm {f}}^{(4)}= 2 m_{\mathrm {x}}^{(2,2)}\) remains, also independently of whether \(x_{k}\) is from \(\mathbb {R}\) or \(\mathbb {C}\). Finally, \(E[f_{k}f_{l}f_{m}f_{m}]\le \left (m_{\mathrm {x}}^{(4)}-3m_{\mathrm {x}}^{(2,2)}\right) \frac 1M\) once k≠l≠m and thus for large M: \(E[f_{k}f_{l}f_{m}f_{m}]\rightarrow 0\). The properties of the driving process are thus preserved under DFT for very long filters. Furthermore, regardless of the input process, after DFT of the long sequence, it is always found that \(m_{\mathrm {f}}^{(4)}=2m_{\mathrm {f}}^{(2,2)}\), which significantly simplifies subsequent analysis.
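For a bipolar process and a short block length, the moments after the DFT can be computed exactly by enumerating all sign patterns. The following sketch is an illustration under assumptions: the function names are hypothetical, the block length M=8 and the DFT bins k=1 and l=2 are arbitrary choices (avoiding the purely real bins 0 and M/2), and the bipolar moments \(m_{\mathrm {x}}^{(4)}=m_{\mathrm {x}}^{(2,2)}=1\) are assumed.

```python
import cmath
from itertools import product


def dft_bin(x, k):
    """k-th entry of the unitary DFT of the block x."""
    M = len(x)
    return sum(x[n] * cmath.exp(-2j * cmath.pi * k * n / M) for n in range(M)) / M ** 0.5


def bipolar_dft_moments(M, k, l):
    """Exact E|f_k|^2, E|f_k|^4 and E[|f_k|^2 |f_l|^2] for IID bipolar input."""
    m2 = m4 = m22 = 0.0
    count = 0
    for x in product((-1.0, 1.0), repeat=M):  # all 2^M equally likely blocks
        pk = abs(dft_bin(x, k)) ** 2
        pl = abs(dft_bin(x, l)) ** 2
        m2 += pk
        m4 += pk * pk
        m22 += pk * pl
        count += 1
    return m2 / count, m4 / count, m22 / count


M = 8
m2_f, m4_f, m22_f = bipolar_dft_moments(M, k=1, l=2)
bound_m4 = 2.0 * 1.0 + (1.0 - 3.0 * 1.0) / M  # 2 m22 + (m4 - 3 m22)/M from the text
gamma = 1.0 + (1.0 / 1.0 - 3.0) / M           # correction factor gamma_x
print(m2_f, m4_f, bound_m4, m22_f, gamma)
```

In this small example the fourth-order moment meets the stated bound with equality and the joint moment equals \(\gamma _{\mathrm {x}}\), so both deviate from their large-M limits 2 and 1 only by terms of order 1/M.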

References

B Widrow, ME Hoff Jr, in IRE WESCON Conv. Rec, Part 4. Adaptive switching circuits, (1960), pp. 96–104.

E Hänsler, G Schmidt, Acoustic Echo and Noise Control (John Wiley & Sons, Chichester, New York, Brisbane, Toronto, Singapore, 2004).

M Rupp, Convergence properties of adaptive equalizer algorithms. IEEE Trans. Signal Process.59(6), 2562–2574 (2011).

AH Sayed, M Rupp, in Proc. SPIE 1995. A time-domain feedback analysis of adaptive gradient algorithms via the small gain theorem (San Diego, USA, 1995), pp. 458–469.

M Rupp, AH Sayed, A time-domain feedback analysis of filtered-error adaptive gradient algorithms. IEEE Trans. Signal Process.44(6), 1428–1439 (1996). doi:http://dx.doi.org/10.1109/78.506609.

AH Sayed, M Rupp, Error-energy bounds for adaptive gradient algorithms. IEEE Trans. Signal Process.44(8), 1982–1989 (1996). doi:http://dx.doi.org/10.1109/78.533719.

AH Sayed, M Rupp, in The DSP Handbook. Robustness issues in adaptive filtering (CRC Press, Florida, 1998).

G Ungerboeck, Theory on the speed of convergence in adaptive equalizers for digital communication. IBM J. Res. Dev.16(6), 546–555 (1972).

LL Horowitz, KD Senne, Performance advantage of complex LMS for controlling narrow-band adaptive arrays. IEEE Trans. Signal Process.29:, 722–736 (1981).

A Feuer, E Weinstein, Convergence analysis of LMS filters with uncorrelated Gaussian data. IEEE Trans. Acoust. Speech Signal Process.ASSP–33(1), 222–230 (1985).

M Rupp, The behavior of LMS and NLMS algorithms in the presence of spherically invariant processes. IEEE Trans. Signal Process.41(3), 1149–1160 (1993).

M Rupp, Adaptive filters: Stable but not convergent. EURASIP J. Adv. Signal Process. (2015).

S Haykin, Adaptive Filter Theory (Prentice–Hall, Inf. and System Sciences Series, Englewood Cliffs, NJ, 1986).

JE Mazo, On the independence theory of equalizer convergence. Bell Syst. Technical J.58(5), 963–993 (1979).

R Nitzberg, Normalized LMS algorithm degradation due to estimation noise. IEEE Trans. Aerospace Electron. Syst.AES-22(6), 740–750 (1986). doi:http://dx.doi.org/10.1109/TAES.1986.310809.

O Macchi, Adaptive Processing (John Wiley & Sons, Chichester, New York, Brisbane, Toronto, Singapore, 1995).

V Solo, X Kong, Adaptive Signal Processing Algorithms. Prentice-Hall information and system sciences series (Prentice-Hall, Englewood Cliffs (NJ), USA, 1995).

H-J Butterweck, in International Conference on Acoustics, Speech, and Signal Processing (ICASSP-95), 2. A steady-state analysis of the LMS adaptive algorithm without use of the independence assumption, (1995), pp. 1404–1407. doi:http://dx.doi.org/10.1109/ICASSP.1995.480504.

SC Douglas, TH-Y Meng, in Proc. IEEE International Conf. on Acoustics, Speech, and Signal Processing. Exact expectation analysis of the LMS adaptive filter without the independence assumption (San Francisco, CA, 1992), pp. 61–64.

SC Douglas, W Pan, Exact expectation analysis of the LMS adaptive filter. IEEE Trans. Signal Process.43(12), 2863–2871 (1995). doi:http://dx.doi.org/10.1109/78.476430.

TY Al-Naffouri, AH Sayed, Transient analysis of data-normalized adaptive filters. IEEE Trans. Signal Process.51(3), 639–652 (2003). doi:http://dx.doi.org/10.1109/TSP.2002.808106.

AH Sayed, Fundamentals of Adaptive Filtering (John Wiley & Sons, Inc., Hoboken (NJ), USA, 2003).

H-J Butterweck, Iterative analysis of the steady-state weight fluctuations in LMS-type adaptive filters. IEEE Trans. Signal Process.47(9), 2558–2561 (1999).

H-J Butterweck, A wave theory of long adaptive filters. IEEE Trans. Circ. Syst. I: Fundamental Theory Appl.48(6), 739–747 (2001).

H-J Butterweck, Steady-state analysis of the long LMS adaptive filter. Signal Process. Elsevier. 91(4), 690–701 (2011). doi:http://dx.doi.org/10.1016/j.sigpro.2010.07.015.

SJM de Almeida, JCM Bermudez, NJ Bershad, A stochastic model for a pseudo affine projection algorithm. IEEE Trans. Signal Process.57:, 117–118 (2009).

HIK Rao, B Farhang-Boroujeny, Analysis of the stereophonic LMS/Newton algorithm and impact of signal nonlinearity on its convergence behavior. IEEE Trans. Signal Process.58(12), 6080–6092 (2010). doi:http://dx.doi.org/10.1109/TSP.2010.2074198.

M Rupp, H-J Butterweck, in Proc. of the 37th Asilomar Conference, 1. Overcoming the independence assumption in LMS filtering, (2003), pp. 607–611.

DG Manolakis, VK Ingle, SM Kogon, Statistical and Adaptive Signal Processing (Artech House, Boston, London, 2005).

WA Gardner, Learning characteristics of stochastic gradient descent algorithms: a general study, analysis and critique. Signal Process.6(2), 113–133 (1984).

RM Gray, Toeplitz and Circulant Matrices: A Review. Foundations and Trends in Communications and Information Theory, vol. 2 (Now publisher, Delft, 2006).

VH Nascimento, AH Sayed, On the learning mechanism of adaptive filters. IEEE Trans. Signal Process.48(6), 1609–1625 (2000). doi:http://dx.doi.org/10.1109/78.845919.

NJ Bershad, Analysis of the normalized LMS algorithm with Gaussian inputs. IEEE Trans. Acoust. Speech Signal Process.34(4), 793–806 (1986).

M Rupp, Bursting in the LMS algorithm. IEEE Trans. Signal Process.43(10), 2414–2417 (1995).

Additional information

Competing interests

The author declares that he has no competing interests.

Authors’ information

Markus Rupp received his Dipl.-Ing. degree in 1988 at the University of Saarbrücken, Germany, and his Dr.-Ing. degree in 1993 at the Technische Universität Darmstadt, Germany, where he worked with Eberhardt Hänsler on designing new algorithms for acoustical and electrical echo compensation. From November 1993 until July 1995, he had a postdoctoral position at the University of Santa Barbara, CA, with Sanjit Mitra where he worked with Ali H. Sayed on a robustness description of adaptive filters with impact on neural networks and active noise control. From October 1995 until August 2001, he was a member of Technical Staff in the Wireless Technology Research Department of Bell-Labs at Crawford Hill, NJ, where he worked on various topics related to adaptive equalization and rapid implementation for IS-136, 802.11 and UMTS. Since October 2001, he is a full professor for Digital Signal Processing in Mobile Communications at the Vienna University of Technology where he founded the Christian-Doppler Laboratory for Design Methodology of Signal Processing Algorithms in 2002 at the Institute of Communications and RF Engineering. He served as Dean from 2005 to 2007 and from 2016-2017. He was an associate editor of IEEE Transactions on Signal Processing from 2002 to 2005, is currently an associate editor of JASP EURASIP Journal of Advances in Signal Processing, JES EURASIP Journal on Embedded Systems. He is an elected AdCom member of EURASIP since 2004 and serving as the president of EURASIP from 2009 to 2010. He authored and co-authored more than 500 papers and patents on adaptive filtering, wireless communications, and rapid prototyping, as well as automatic design methods. He is a Fellow of the IEEE.

Additional file

Additional file 1

MATLAB code. The MATLAB code for the experiments is publicly available at https://www.nt.tuwien.ac.at/downloads/. (ZIP 60 kb)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Rupp, M. Asymptotic equivalent analysis of the LMS algorithm under linearly filtered processes. EURASIP J. Adv. Signal Process. 2016, 18 (2016). https://doi.org/10.1186/s13634-015-0291-1

DOI: https://doi.org/10.1186/s13634-015-0291-1